Category Archives: Thinking Wonder

Mobius Universe

The other bit of fun for 2014 was to finish a thought experiment (the longest thus far: ~1984-2014), coming to a conclusion about the shape of the universe, how this could be used to imagine the next contractionary cycle, and perhaps, in time, how it might point the way for far-imagined journeys.

The conclusion? The universe is expanding inwards. Perhaps this might be named “the Mobius Universe”? I made a testing puzzle about this: [link] and there’s a new note here [link].**

The strange thing about constructions like this is that you must look away to observe them. We talk of “the mind’s eye”; this is the imagination’s eye. This is a way to solve many sorts of hard problems. Find imaginative-mind-play time. Put aside what you are tussling with. Accomplish entirely different tasks. Find the focus by looking away. Come back refreshed to tackle it again. Every refresh invites a new perspective. Every leaving gets you farther.

Recharge recharges clarity.

** Original 05Oct2021 version (without “Mobius Universe” title)

Hadley’s Primer

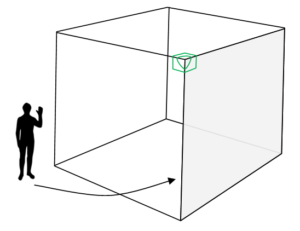

I’m working out lessons to teach how to think in 3D. Here’s one of the first ideas for this. I got to watching Jodie Foster’s movie Contact and came to the segment where Hadley reveals the primer to Ellie: https://www.youtube.com/watch?v=-SbKE_U4b7U

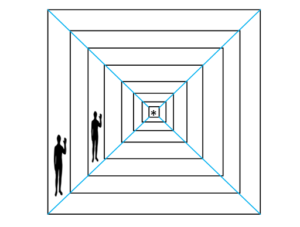

Director Robert Zemeckis needed to explain the idea without detailing the depths that an efficient data set could get to. It gets neat when we replicate the Hadley Primer into a 3D library with many books, layers and leaves:

This is Zemeckis’ version:

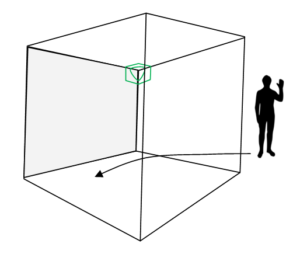

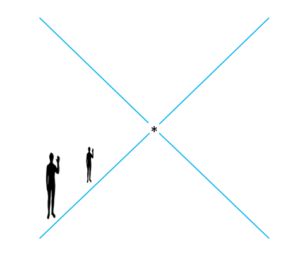

Now imagine the underlay also being a book, inverted:

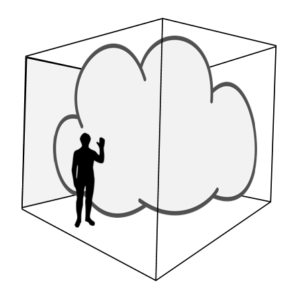

Now imagine Zemeckis’ book as a level-1 hypercube [a tesseract projected into three-space]:

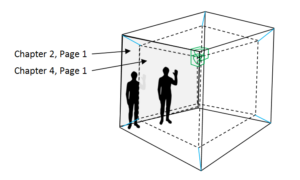
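For anyone who wants to tinker with that projection, here is a minimal Python sketch of one common way to draw a tesseract in three-space: a simple perspective projection along the fourth axis. The function names and the viewer distance of 3 are my own assumptions for illustration, not anything taken from the film.

```python
from itertools import product


def tesseract_vertices():
    """Return the 16 vertices of a tesseract, each coordinate being -1 or +1."""
    return list(product((-1.0, 1.0), repeat=4))


def project_to_3d(vertex, viewer_w=3.0):
    """Perspective-project a 4D point (x, y, z, w) into 3D.

    The viewer sits at w = viewer_w (an assumed, illustrative distance).
    Points farther from the viewer along w are scaled down more.
    """
    x, y, z, w = vertex
    scale = 1.0 / (viewer_w - w)
    return (x * scale, y * scale, z * scale)


vertices = tesseract_vertices()
projected = [project_to_3d(v) for v in vertices]

# The 8 vertices with w = +1 are nearer the viewer and form the outer cube;
# the 8 vertices with w = -1 are farther away and form the inner cube.
outer = [p for v, p in zip(vertices, projected) if v[3] > 0]
inner = [p for v, p in zip(vertices, projected) if v[3] < 0]

outer_half_width = max(abs(c) for p in outer for c in p)
inner_half_width = max(abs(c) for p in inner for c in p)

print(outer_half_width, inner_half_width)
```

With the viewer at w = 3, the outer cube comes out with half-width 0.5 and the inner cube with half-width 0.25: a cube nested within a cube, which is the familiar picture of a projected tesseract.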

Now imagine it as a multi-layered hypercube…3D books nested within books within books…

Now imagine what lies at the “centre” of the nestings…

Is it “the centre”? Is it a singularity? Is it a cloud of data? Can we ever get there?

A book with infinite leaves that “linear we” cannot perceive?

Words in a starry firmament? Words that we cannot truly read?

Yet…